EU lawmakers are eyeing risk-based rules for AI, per leaked white paper – TechCrunch

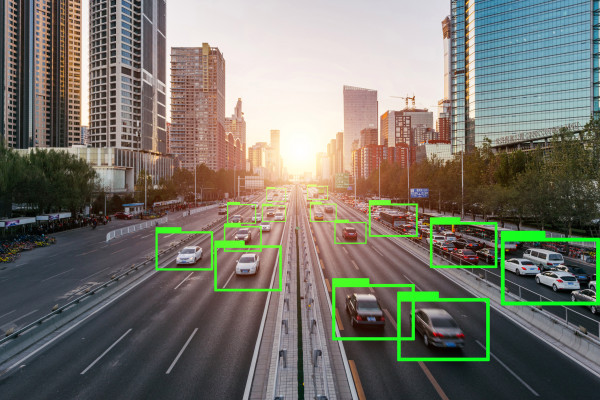

The European Commission is considering a temporary ban on the use of facial recognition technology, according to a draft proposal for regulating artificial intelligence obtained by Euroactiv.

Creating rules to ensure AI is ‘trustworthy and human’ has been an early flagship policy promise of the new Commission, led by president Ursula von der Leyen.

But the leaked proposal suggests the EU’s executive body is in fact leaning towards tweaks of existing rules and sector/app-specific risk assessments and requirements, rather than anything as firm as blanket sectoral requirements or bans.

The leaked Commission white paper floats the idea of a three-to-five-year period in which the use of facial recognition technology could be prohibited in public places, to give EU lawmakers time to devise ways to assess and manage risks around the use of the technology, such as to people’s privacy rights or the risk of discriminatory impacts from biased algorithms.

“This would safeguard the rights of individuals, in particular against any possible abuse of the technology,” the Commission writes, adding that: “It would be necessary to foresee some exceptions, notably for activities in the context of research and development and for security purposes.”

However, the text raises immediate concerns about imposing even a time-limited ban, which is described as “a far-reaching measure that might hamper the development and uptake of this technology”, and the Commission goes on to state that its preference “at this stage” is to rely on existing EU data protection rules, aka the General Data Protection Regulation (GDPR).

The white paper contains a number of options the Commission is still considering for regulating the use of artificial intelligence more generally.

These range from voluntary labelling; to imposing sectorial requirements for the public sector (including on the use of facial recognition tech); to mandatory risk-based requirements for “high-risk” applications (such as within risky sectors like healthcare, transport, policing and the judiciary, as well as for applications which can “produce legal effects for the individual or the legal entity or pose risk of injury, death or significant material damage”); to targeted amendments to existing EU product safety and liability legislation.

The proposal also emphasizes the need for an oversight governance regime to ensure rules are followed, though the Commission suggests leaving it open to Member States to choose whether to rely on existing governance bodies for this task or create new ones dedicated to regulating AI.

Per the draft white paper, the Commission says its preference for regulating AI is option 3 combined with options 4 and 5: that is, mandatory risk-based requirements on developers (of whatever sub-set of AI applications are deemed “high-risk”) that could result in some “mandatory criteria”, combined with relevant tweaks to existing product safety and liability legislation, and an overarching governance framework.

Hence it appears to be leaning towards a relatively light-touch approach, focused on “building on existing EU legislation” and creating app-specific rules for a sub-set of “high-risk” AI apps/uses, and one which likely won’t stretch to even a temporary ban on facial recognition technology.

Much of the white paper is also taken up with discussion of strategies for “supporting the development and uptake of AI” and “facilitating access to data”.

“This risk-based approach would focus on areas where the public is at risk or an important legal interest is at stake,” the Commission writes. “This strictly targeted approach would not add any new additional administrative burden on applications that are deemed ‘low-risk’.”

EU commissioner Thierry Breton, who oversees the internal market portfolio, expressed resistance to creating rules for artificial intelligence last year, telling the EU parliament then that he “won’t be the voice of regulating AI”.

For “low-risk” AI apps, the white paper notes that provisions in the GDPR which give individuals the right to receive information about automated processing and profiling, and which set a requirement to carry out a data protection impact assessment, would apply.

However, the regulation only defines limited rights and restrictions over automated processing, applying in instances where there’s a legal or similarly significant effect on the people involved. So it’s not clear how extensively it would in fact apply to “low-risk” apps.

If it’s the Commission’s intention to also rely on GDPR to regulate higher-risk uses (such as, for example, police forces’ use of facial recognition tech) instead of creating a more explicit sectoral framework to restrict their use of a highly privacy-hostile AI technology, it could exacerbate an already confusing legislative picture where law enforcement is concerned, according to Dr Michael Veale, a lecturer in digital rights and regulation at UCL.

“The situation is extremely unclear in the area of law enforcement, and particularly the use of public private partnerships in law enforcement. I would argue the GDPR in practice forbids facial recognition by private companies in a surveillance context without member states actively legislating an exemption into the law using their powers to derogate. However, the merchants of doubt at facial recognition firms wish to sow heavy uncertainty into that area of law to legitimise their businesses,” he told TechCrunch.

“As a result, extra clarity would be extremely welcome,” Veale added. “The issue isn’t restricted to facial recognition however: Any type of biometric monitoring, such as voice or gait recognition, should be covered by any ban, because in practice they have the same effect on individuals.”

An advisory body set up to advise the Commission on AI policy set out a number of recommendations in a report last year, including suggesting a ban on the use of AI for mass surveillance and social credit scoring systems of citizens.

But its recommendations were criticized by privacy and rights experts for falling short by failing to grasp wider societal power imbalances and structural inequality issues which AI risks exacerbating, including by supercharging existing rights-eroding business models.

In a paper last year Veale dubbed the advisory body’s work a “missed opportunity”, writing that the group “largely ignore infrastructure and power, which should be one of, if not the most, central concern around the regulation and governance of data, optimisation and ‘artificial intelligence’ in Europe going forwards”.

Resource connection

The 5 Best & 5 Worst Cenobites In The Whole Franchise

The 5 Best & 5 Worst Cenobites In The Whole Franchise  When is it time to upgrade your phone?

When is it time to upgrade your phone?  93% of Chinese minors are now online – TechCrunch

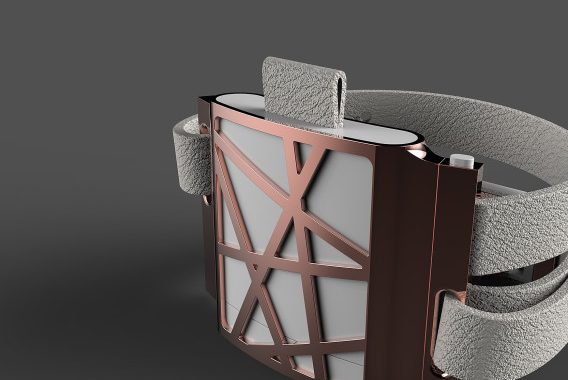

93% of Chinese minors are now online – TechCrunch  UK femtech startup Astinno, which is working on a wearable to combat hot flushes, picks up grant worth $450k – TechCrunch

UK femtech startup Astinno, which is working on a wearable to combat hot flushes, picks up grant worth $450k – TechCrunch  BMW axes plans to bring electric iX3 SUV to US – TechCrunch

BMW axes plans to bring electric iX3 SUV to US – TechCrunch  Why you shouldn’t have sex on the first date

Why you shouldn’t have sex on the first date  7 Tips for installing a security door in your home

7 Tips for installing a security door in your home  Watch Charli XCX Crawl Out of a Grave for “Good Ones” Performance on Fallon

Watch Charli XCX Crawl Out of a Grave for “Good Ones” Performance on Fallon